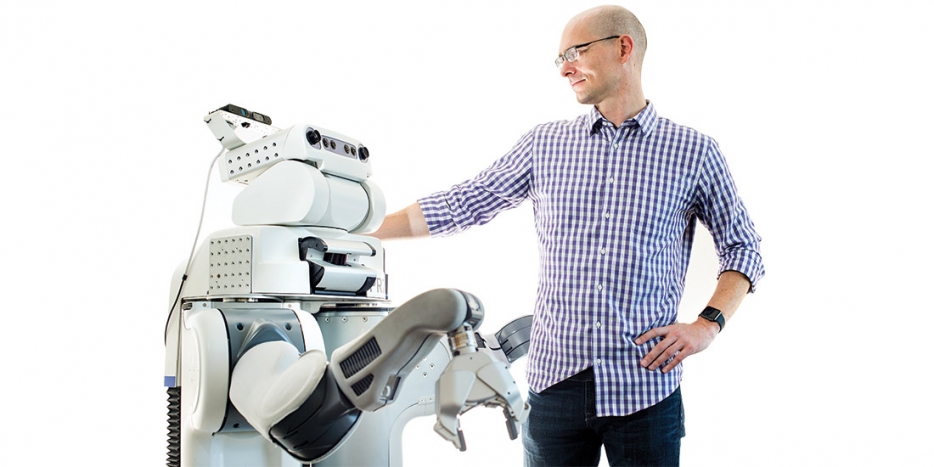

BRETT with EECS professor Pieter Abbeel. (Photos by Noah Berger)

Q+A with BRETT

Born into the PR2 family of personal robots made by the Willow Garage robotics research lab, BRETT joined EECS professor Pieter Abbeel’s artificial intelligence program in 2010, arriving fully grown but with infantile cognition. We asked BRETT (whose formal name is Berkeley Robot for the Elimination of Tedious Tasks) about its educational progress as we watched it learn to assemble a toy airplane. While BRETT has no organs of speech, nearby computer screens displayed its thought processes. Abbeel acted as our translator.

The last time we met, you were spending a lot of time folding towels. You’d gotten it down to 20 minutes. How are things going?

I still fold towels from time to time, and I’m a lot faster now — 90 seconds! But these days I’m concentrating on improving my basic learning ability.

“One of the main things we’ve been looking at is: how can we get a robot to think about situations it’s never seen before? You can’t just execute blindly the same set of motions and expect success.”

– Pieter Abbeel | EECS professor | May 8 | PBS NewsHour, on the future of artificial intelligence.

How are you doing that?

My coach, Pieter Abbeel, decided that instead of my spending many, many hours on a specific task in a specific environment, it makes more sense to figure out how to learn a variety of tasks, either by trial and error or by watching humans do them. And to learn in a general way, so that what I learn doing one task can help me with the next. To do this, an approach called deep learning has been key.

How is deep learning working for you?

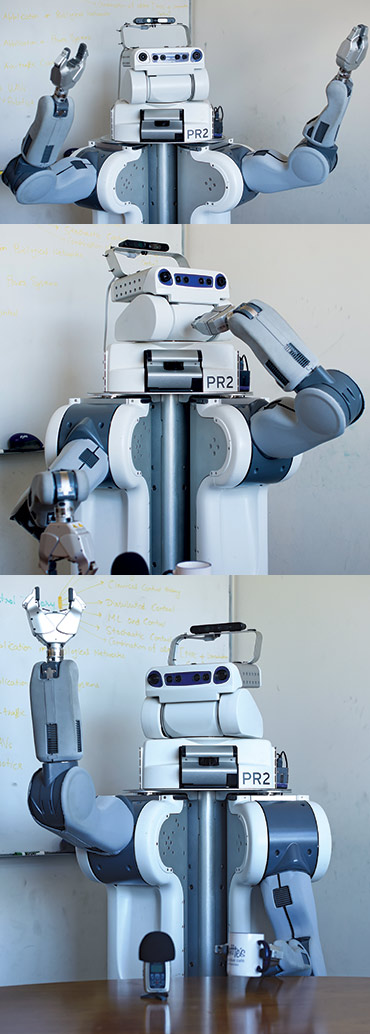

I had to adopt new ways of thinking, but so far so good. In many robots, part of their programming is devoted to figuring out what they’re looking at, while separate programs control their motor system. I integrate and learn these, using my multilayer artificial neural network.

Your what?

A network inspired by the neurons in your brain, except mine aren’t squishy, they’re digital. My brain is in that desktop PC you’re looking at over there; it communicates with my sensors and motors via Wi-Fi. The monitor shows a map of my neural activity, starting with gauging what I’m looking at, then proceeding through the various motor actions needed to complete a task. Like putting this… darn toy airplane together.

Where does the learning come in?

Each artificial neuron is connected to many others, and those connections are “weighted.” Numbers, really. When I make a good move toward accomplishing the task, coordinating the right neurons, the weights of the active connections increase. They decrease when I make a wrong move. Currently my network incorporates 92,000 weighted neural connections.
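For readers who want to see the idea in miniature, here is a toy sketch of the weight adjustment BRETT describes. It is an illustration under simplifying assumptions, not the training method used in Abbeel's lab: the function name `update_weights`, the `learning_rate` value, and the three-connection example are all hypothetical. Connections that were active during a move are nudged up when the move is good and down when it is wrong; inactive connections are left alone.

```python
def update_weights(weights, active, reward, learning_rate=0.1):
    """Nudge the weights of active connections up or down.

    weights: list of connection weights (plain numbers)
    active:  list of 1/0 flags, 1 if that connection took part in the move
    reward:  +1 for a good move, -1 for a wrong move (hypothetical signal)
    """
    return [w + learning_rate * reward * a for w, a in zip(weights, active)]


# Hypothetical example: three connections, the first and third were active.
weights = [0.5, 0.5, 0.5]
active = [1, 0, 1]

after_good_move = update_weights(weights, active, reward=+1)
after_wrong_move = update_weights(weights, active, reward=-1)

print("after a good move: ", after_good_move)
print("after a wrong move:", after_wrong_move)
```

A real network like the one BRETT mentions applies the same kind of adjustment across tens of thousands of connections at once, typically with a more refined rule for deciding how much each weight should change.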

That sounds like a lot.

You humans have 100 trillion neural connections. Don’t brag about how fast you can fold a towel.

What’s next for you?

Deep learning has already put me on par with traditional programming-heavy approaches, which need a new program written for each task. With further advances in learning, I hope to achieve a much broader ability to generalize. One of these days I want to roll into a house I’ve never seen before and cook, set the table, clean up after dinner, make the beds, take out the garbage — all with no special programming.

And…?

Okay, that too — fold the laundry.